[Update June 13, 2006]: This work received the "Best Robotics Paper" award at Computer and Robot Vision 2006. The paper is here:

- Robert Sim, Pantelis Elinas, Matt Griffin, Alex Shyr, and James J. Little, "Design and analysis of a framework for real-time vision-based SLAM using Rao-Blackwellised particle filters", Proceedings of the 3rd Canadian Conference on Computer and Robotic Vision (CRV 2006), Quebec City, QC, 9 pages, 2006. [ pdf ]

[Update Feb 9, 2006]: Our latest ICRA paper describing this work:

- Pantelis Elinas, Robert Sim, and James J. Little, "σSLAM: Stereo Vision SLAM Using the Rao-Blackwellised Particle Filter and a Novel Mixture Proposal Distribution", Proceedings of the IEEE International Conference on Robotics and Automation, Orlando, FL, 7 pages, 2006. [ pdf ]

- Robert Sim, Matt Griffin, Alex Shyr, and James J. Little, "Scalable real-time vision-based SLAM for planetary rovers", Proceedings of the IEEE IROS Workshop on Robot Vision for Space Applications, Edmonton, AB, 6 pages, 2005. [ pdf ]

- Robert Sim, Pantelis Elinas, Matt Griffin, and James J. Little, "Vision-based SLAM using the Rao-Blackwellised Particle Filter", IJCAI Workshop on Reasoning with Uncertainty in Robotics (RUR), Edinburgh, Scotland, 8 pages, 2005. [ pdf ]

Some map construction movies (click to view; Windows users need the DivX codec: www.divx.com). Older results are below.

[Update November 11, 2005]: New results: a movie of SLAM using a mixture proposal.

| | |
|---|---|
| UBC Collaborative Robotics Lab (6 DOF, no odometry, mixture proposal distribution) | [input sequence**] [input sequence**] |
| UBC Laboratory for Computational Intelligence (West) (3 DOF, using odometry) | [input sequence**] [input sequence**] |

** For full stereo input sequences and calibration information, please send me an email: simra @ cs . ubc . ca

[Update July 21, 2005]: Much of the text below is outdated (of course). The key advances in the last few months are that we can now handle six degrees of freedom, we've implemented a mixture proposal distribution to detect loop closure, and we have a much more robust system in general. More details to come. :-)
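As a rough illustration of what sampling from a mixture proposal involves, here is a minimal 1D Python sketch. It assumes the mixture blends a motion-model Gaussian with a "global" Gaussian centred on a pose hypothesis derived from observations. That choice of components, the mixing weight `phi`, and all function names are illustrative assumptions, not details taken from the system described here.

```python
import math
import random


def gaussian_pdf(x, mean, std):
    """Density of a 1D Gaussian at x."""
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))


def sample_mixture(rng, phi, motion, global_est):
    """Draw a 1D pose from phi * global + (1 - phi) * motion.

    `motion` and `global_est` are (mean, std) pairs (hypothetical stand-ins
    for a motion-model proposal and an observation-driven pose hypothesis).
    """
    mean, std = global_est if rng.random() < phi else motion
    return rng.gauss(mean, std)


def mixture_pdf(x, phi, motion, global_est):
    """Density of the mixture proposal at x, needed for importance weights."""
    return phi * gaussian_pdf(x, *global_est) + (1.0 - phi) * gaussian_pdf(x, *motion)


# Example: the motion model believes the robot is near 0.0, while the global
# hypothesis places it near 5.0 (e.g. a previously mapped area is recognised).
rng = random.Random(0)
motion, global_est = (0.0, 0.5), (5.0, 0.5)
samples = [sample_mixture(rng, 0.2, motion, global_est) for _ in range(1000)]
near_global = sum(1 for s in samples if s > 2.5)
print(f"{near_global} of {len(samples)} samples landed near the global hypothesis")
```

Because some fraction of particles is always drawn near the global hypothesis, a filter of this kind can recover when the motion-model estimate has drifted, which is the situation that arises at loop closure.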

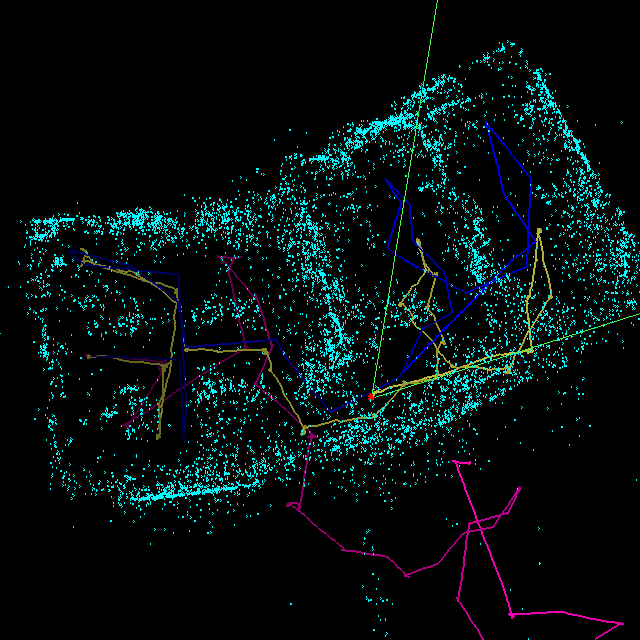

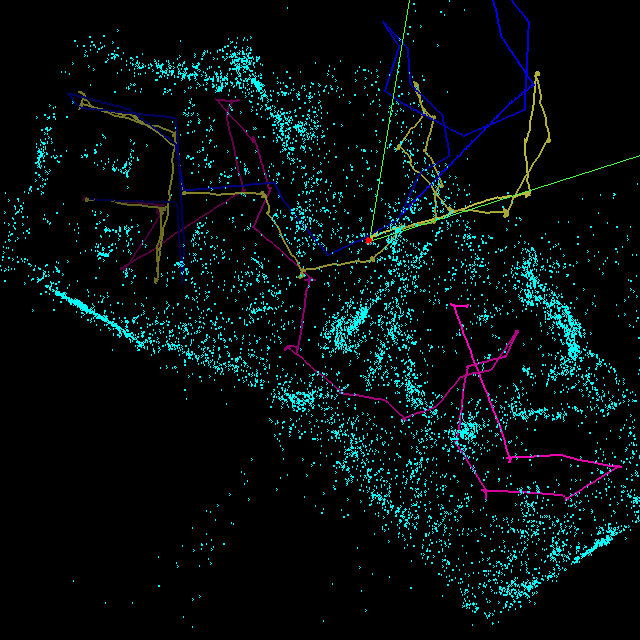

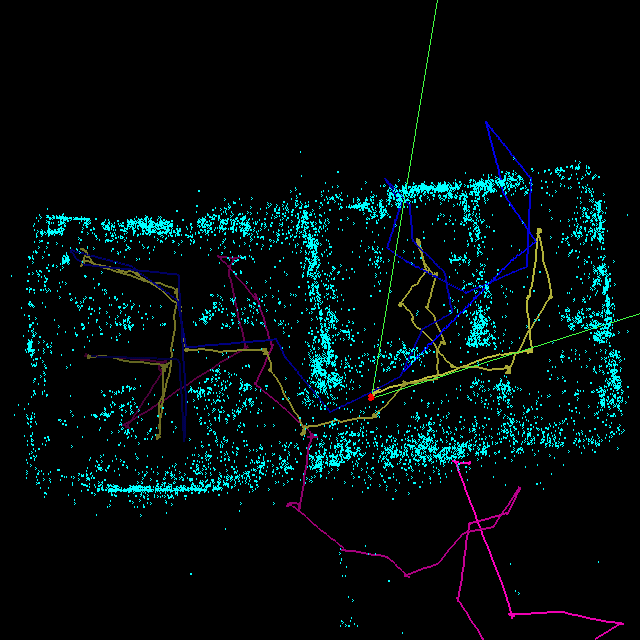

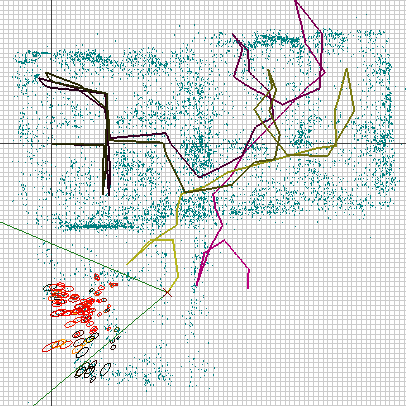

This is joint work with Pantelis Elinas, Matt Griffin, Alex Shyr and Jim Little. The map above was constructed using a Rao-Blackwellised particle filter (RBPF) with 400 particles. The map is made up of 3D landmarks constructed from SIFT features. The key contribution of this work is that, where most RBPF implementations for SLAM rely on odometric observations for a motion model, we rely on motion estimates computed directly from sequential pairs of stereo images (similar to FastSLAM 2.0, but without taking into account *any* odometric information).

This map consists of roughly 11,000 3D landmarks, associated with a subset of 38,000 SIFT features (SIFT features observed more than 3 times are promoted to landmarks). It should be evident that the robot traversed between two rooms. The computation finished after 4000 frames. We haven't tried smaller numbers of particles, and it is quite possible that the map quality would be just as good. The total trajectory length is 65.7 m, and the room is approximately 18 m by 9 m in size. Average compute time was 11.9 s/frame. However, for memory-related reasons we are not using fast methods for SIFT correspondence (such as a kd-tree), nor have we spent much effort on optimization.
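The promotion rule mentioned above (features observed more than 3 times become landmarks) can be sketched in a few lines of Python. This is only an illustration: the `(frame_index, feature_id)` observation format and the function name are hypothetical, and the string IDs stand in for matched SIFT descriptors.

```python
from collections import Counter

# From the text: features observed more than 3 times are promoted.
PROMOTION_THRESHOLD = 3


def promote_landmarks(observations, threshold=PROMOTION_THRESHOLD):
    """Return the sorted IDs of features seen more than `threshold` times.

    `observations` is an iterable of (frame_index, feature_id) pairs
    (a hypothetical format chosen for this sketch).
    """
    counts = Counter(feature_id for _, feature_id in observations)
    return sorted(fid for fid, n in counts.items() if n > threshold)


# Feature "a" is seen 4 times, "b" 3 times, and "c" once.
observations = [
    (0, "a"), (1, "a"), (2, "a"), (3, "a"),
    (0, "b"), (1, "b"), (2, "b"),
    (5, "c"),
]
print(promote_landmarks(observations))  # prints ['a']
```

Requiring repeated observations before promotion keeps one-off or unstable features out of the map, which is consistent with only a subset of the 38,000 features ending up as landmarks.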

Sample Results

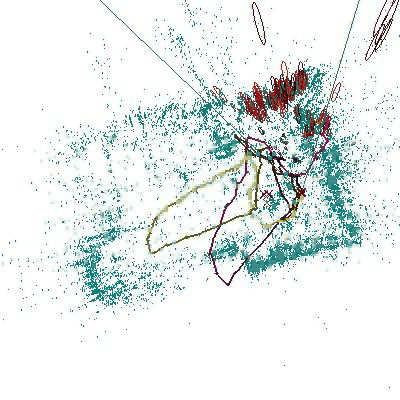

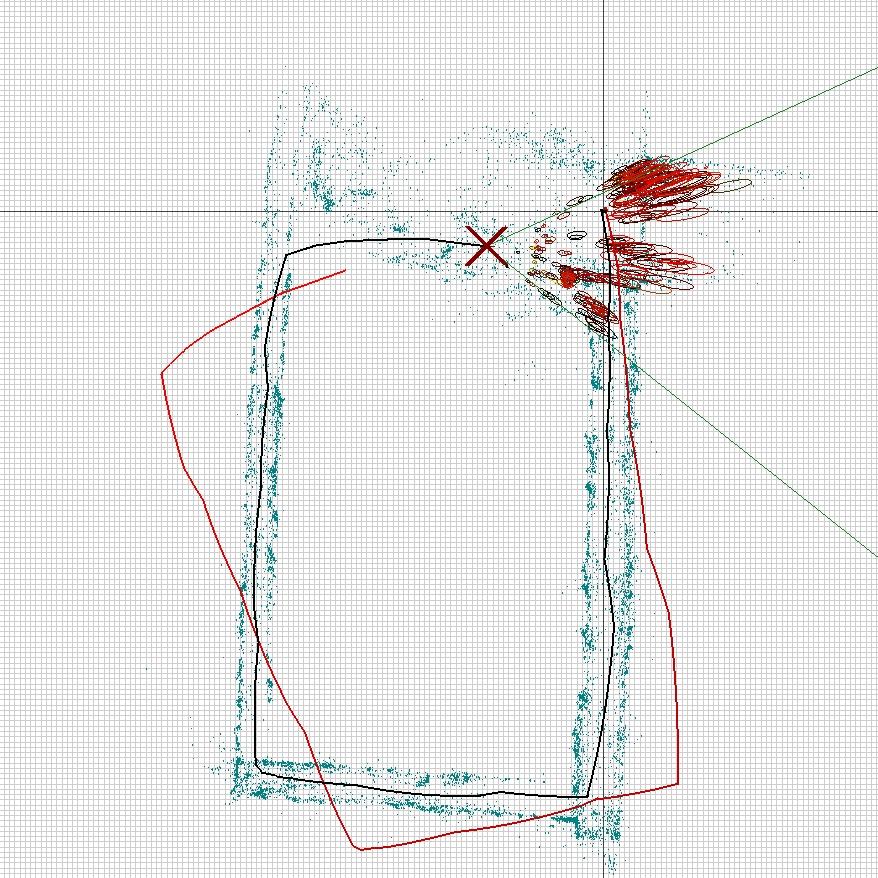

[Oct 25, 2005]: Here's a run in which the robot successfully closes a loop (about 30m tall) over 3000 frames, using 500 particles, vision-based odometry, and a mixture proposal distribution for global localization.

More Results

Legend:

| Color | Meaning |
|---|---|
| Yellow | Max-weight particle trajectory |
| Blue | Robot odometer |
| Pink | Visual odometer (see below) |